12 Common Mistakes in Medical Image Annotation

Medical image annotation plays a critical role in the success of AI-powered healthcare solutions. From disease diagnosis and treatment planning to radiology workflow automation and clinical decision support systems, high-quality annotated medical images are the foundation of accurate machine learning models.

However, many healthcare organizations, AI startups, medical imaging companies, and research institutions make costly mistakes during the medical image annotation process. Even small annotation inconsistencies can significantly reduce model accuracy, increase bias, delay regulatory approval, and compromise patient safety.

As artificial intelligence continues to reshape modern healthcare, the demand for precise and scalable medical image annotation services is growing rapidly. Yet achieving reliable annotations requires more than simply labeling images. It demands domain expertise, quality assurance, compliance management, standardized workflows, and advanced annotation strategies.

This article explores the 12 most common mistakes in medical image annotation, their impact on AI model performance, and practical strategies to avoid them.

What Is Medical Image Annotation?

Medical image annotation is the process of labeling medical imaging data to train artificial intelligence and machine learning models. Annotated medical images help AI systems identify diseases, anatomical structures, abnormalities, lesions, tumors, organs, fractures, and other clinical findings.

Medical image annotation is widely used across healthcare sectors, including:

- Radiology

- Oncology

- Cardiology

- Neurology

- Ophthalmology

- Pathology

- Orthopedics

- Dermatology

- Surgical robotics

- Telemedicine

Common medical imaging modalities include:

- X-rays

- MRI scans

- CT scans

- Ultrasound images

- PET scans

- Mammograms

- Histopathology slides

- Retinal scans

- Endoscopy videos

Medical image annotation tasks may involve:

- Bounding boxes

- Semantic segmentation

- Polygon annotation

- Keypoint annotation

- Landmark annotation

- 3D volumetric annotation

- Instance segmentation

- Pixel-wise segmentation

- Classification tagging

High-quality annotation directly affects AI model precision, recall (sensitivity), specificity, and overall diagnostic performance.
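To make these terms concrete, here is a minimal Python sketch that computes them from confusion-matrix counts. The counts are hypothetical. Every count is tallied against the annotated reference labels, so a single mislabeled scan moves a case from one category to another and distorts every metric derived from them.

```python
def diagnostic_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Compute common diagnostic metrics from confusion-matrix counts."""
    return {
        # Of all cases the model flagged as positive, the share that truly are positive.
        "precision": tp / (tp + fp),
        # Recall and sensitivity are the same quantity: the share of true positives detected.
        "sensitivity": tp / (tp + fn),
        # The share of truly negative cases the model correctly cleared.
        "specificity": tn / (tn + fp),
    }

# Hypothetical counts for a nodule detector evaluated on 150 labeled scans.
print(diagnostic_metrics(tp=40, fp=10, tn=90, fn=10))
# → {'precision': 0.8, 'sensitivity': 0.8, 'specificity': 0.9}
```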

Why Accuracy in Medical Image Annotation Matters

In healthcare AI, annotation errors can have severe consequences. Unlike general-purpose image annotation, medical annotation demands exceptional precision because incorrect labels are learned by models whose outputs can affect real-world patient outcomes.

Poor annotations can result in:

- Misdiagnosis by AI systems

- False positives or false negatives

- Reduced clinical trust in AI

- Increased training costs

- Regulatory compliance issues

- Biased machine learning models

- Delayed product deployment

- Poor model generalization

Healthcare AI models are only as reliable as the annotated datasets used to train them.

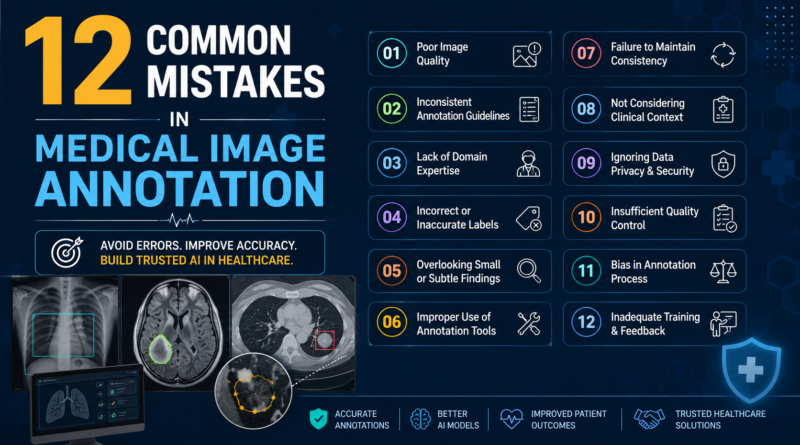

12 Common Mistakes in Medical Image Annotation

1. Using Non-Expert Annotators for Complex Medical Data

One of the most common mistakes in medical image annotation is assigning annotation tasks to non-medical personnel without clinical expertise.

Medical images are highly specialized. Identifying tumors, lesions, fractures, or subtle anatomical abnormalities often requires extensive medical training. Generic annotators may fail to recognize clinically important patterns.

Why This Is a Problem

- Inaccurate labeling of diseases

- Missed pathological findings

- Poor segmentation boundaries

- Reduced diagnostic AI performance

- Increased model bias

For example, annotating lung nodules in CT scans or brain tumors in MRI images requires radiology expertise. Even experienced general annotators may not understand nuanced imaging characteristics.

Best Practice

Healthcare organizations should collaborate with trained radiologists, pathologists, clinicians, and medical imaging specialists.

Working with experienced providers offering specialized medical annotation expertise significantly improves dataset quality. Businesses seeking scalable and accurate healthcare annotation solutions often rely on professional providers such as https://www.oursglobal.com/outsource-image-annotation-services for high-quality annotation workflows.

2. Lack of Standardized Annotation Guidelines

Without standardized annotation protocols, different annotators may interpret medical findings inconsistently.

For instance:

- One annotator may classify a lesion as malignant

- Another may classify the same lesion as benign

- Segmentation boundaries may vary dramatically

- Organ labeling may become inconsistent

This inconsistency creates noisy datasets that confuse AI models.

Common Causes

- Poor project documentation

- Unclear labeling instructions

- Undefined edge cases

- Missing clinical definitions

- No annotation consensus process

Best Practice

Develop comprehensive annotation guidelines that include:

- Label definitions

- Disease classification criteria

- Segmentation rules

- Anatomical references

- Edge-case handling

- Quality benchmarks

- Examples of correct annotations

Standardization improves annotation consistency and model reliability.
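Guidelines are easier to enforce when the label definitions also exist in machine-readable form. The sketch below is a hypothetical example: the label names, attribute values, and the `validate` helper are illustrative assumptions, not a standard format. It encodes a few rules from a written guideline, including an explicit "indeterminate" option for edge cases, and checks each annotation against them so violations are caught at submission time instead of during model training.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical schema derived from a written annotation guideline.
# Each label lists the geometries the guideline permits and its required attributes.
GUIDELINE_SCHEMA = {
    "version": "1.2",
    "labels": {
        "lung_nodule": {"types": {"polygon", "mask"}, "requires_attributes": {"malignancy"}},
        "rib_fracture": {"types": {"bounding_box"}, "requires_attributes": set()},
    },
    # "indeterminate" gives annotators a defined answer for ambiguous edge cases.
    "attribute_values": {"malignancy": {"benign", "malignant", "indeterminate"}},
}


@dataclass
class Annotation:
    label: str
    geometry_type: str
    attributes: dict


def validate(ann: Annotation, schema: dict = GUIDELINE_SCHEMA) -> list[str]:
    """Return a list of guideline violations (empty if the annotation conforms)."""
    rule = schema["labels"].get(ann.label)
    if rule is None:
        return [f"unknown label '{ann.label}'"]
    errors = []
    if ann.geometry_type not in rule["types"]:
        errors.append(f"'{ann.label}' may not be drawn as '{ann.geometry_type}'")
    for attr in rule["requires_attributes"]:
        value = ann.attributes.get(attr)
        if value is None:
            errors.append(f"missing required attribute '{attr}'")
        elif value not in schema["attribute_values"][attr]:
            errors.append(f"invalid value '{value}' for '{attr}'")
    return errors


print(validate(Annotation("lung_nodule", "bounding_box", {})))
```

Versioning the schema (the `version` field) also makes it possible to tell later which guideline revision an annotation was made under.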

3. Ignoring Inter-Annotator Agreement

Inter-annotator agreement measures how consistently multiple experts annotate the same medical images.

Many organizations overlook this critical quality metric.

When annotation agreement is low, datasets become unreliable.

Consequences of Poor Agreement

- Dataset inconsistency

- Model confusion during training

- Reduced AI accuracy

- Higher false detection rates

- Unstable model performance

Best Practice

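Organizations should regularly evaluate:

- Cohen’s kappa score (chance-corrected agreement on categorical labels)

- Dice similarity coefficient (overlap between segmentation masks)

- Intersection over Union (IoU) (overlap between masks or bounding boxes)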

- Consensus review workflows
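The agreement metrics above can be sketched in a few lines of plain Python. This version assumes two annotators (Fleiss' kappa is the usual extension for more) and represents binary masks as sets of `(row, col)` pixel coordinates, which is a simplification for illustration; in practice a library routine such as scikit-learn's `cohen_kappa_score` can be used for kappa.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators on categorical labels."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected if each annotator labeled at random with their own label frequencies.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label for every image
    return (observed - expected) / (1 - expected)


def dice_and_iou(mask_a, mask_b):
    """Overlap between two binary masks given as sets of (row, col) pixels."""
    inter = len(mask_a & mask_b)
    union = len(mask_a | mask_b)
    if union == 0:
        return 1.0, 1.0  # both masks empty: treat as perfect agreement
    return 2 * inter / (len(mask_a) + len(mask_b)), inter / union


reader_1 = ["benign", "malignant", "benign", "benign"]
reader_2 = ["benign", "malignant", "malignant", "benign"]
print(cohens_kappa(reader_1, reader_2))  # → 0.5

mask_1 = {(0, 0), (0, 1), (1, 0), (1, 1)}
mask_2 = {(0, 1), (1, 1), (0, 2), (1, 2)}
print(dice_and_iou(mask_1, mask_2))  # → (0.5, 0.3333333333333333)
```

Note that the two readers agree on 75% of cases, yet kappa is only 0.5 once chance agreement is removed, which is why raw percent agreement tends to overstate consistency.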

Disagreements should be resolved through expert adjudication.
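One way to decide which cases reach an adjudicator is sketched below. The function name and the two-thirds threshold are hypothetical choices, not an established standard. The sketch accepts a label only when a sufficient share of annotators agree and otherwise escalates the case; the adjudicator's decision, not the vote, becomes the final label.

```python
from __future__ import annotations

from collections import Counter


def consensus_or_escalate(votes: dict[str, str], min_share: float = 2 / 3):
    """Return (label, None) if enough annotators agree, else (None, reason) for adjudication."""
    counts = Counter(votes.values())
    label, top = counts.most_common(1)[0]
    if top / len(votes) >= min_share:
        return label, None
    return None, f"only {top}/{len(votes)} annotators agree; send to expert adjudicator"


print(consensus_or_escalate({"r1": "benign", "r2": "benign", "r3": "malignant"}))
print(consensus_or_escalate({"r1": "benign", "r2": "malignant"}))
```

Setting `min_share=1.0` gives the strictest policy, in which any disagreement at all goes to expert adjudication.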

4. Poor Quality Control Processes

Medical annotation projects require rigorous quality assurance procedures.

Unfortunately, many companies prioritize speed over quality.

Insufficient QA processes often lead to:

- Missing labels

- Incorrect segmentation

- Duplicate annotations

- Inconsistent classifications

- Annotation drift over time

Best Practice

A strong quality control pipeline should include:

- Multi-level reviews

- Expert verification

- Automated validation checks

- Random sample audits

- Continuous performance monitoring

- Annotation correction loops

High-quality medical datasets demand continuous validation.
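Two items from the pipeline above, automated validation checks and random sample audits, might look roughly like this. The record format (dicts with `image_id`, `label`, and a hashable `geometry` tuple) and both function names are hypothetical; a real pipeline would add many more checks.

```python
import random
from collections import Counter


def automated_checks(records, expected_image_ids):
    """Flag missing and duplicate annotations in a batch (hypothetical record format)."""
    issues = []
    annotated = Counter(r["image_id"] for r in records)
    # Images that should have been labeled but have no annotation at all.
    for image_id in sorted(expected_image_ids - set(annotated)):
        issues.append(("missing_label", image_id))
    # The same label with identical geometry on the same image is treated as a duplicate.
    seen = Counter((r["image_id"], r["label"], r["geometry"]) for r in records)
    for (image_id, _label, _geometry), n in seen.items():
        if n > 1:
            issues.append(("duplicate", image_id))
    return issues


def audit_sample(records, fraction=0.05, seed=0):
    """Draw a reproducible random sample of records for manual expert audit."""
    if not records:
        return []
    k = max(1, round(len(records) * fraction))
    return random.Random(seed).sample(records, k)


batch = [
    {"image_id": "img1", "label": "nodule", "geometry": (1, 2, 3, 4)},
    {"image_id": "img1", "label": "nodule", "geometry": (1, 2, 3, 4)},
    {"image_id": "img2", "label": "nodule", "geometry": (5, 6, 7, 8)},
]
print(automated_checks(batch, {"img1", "img2", "img3"}))
```

Fixing the random seed keeps each audit sample reproducible, so a reviewer can later confirm exactly which records were checked.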

5. Inadequate Handling of Edge Cases

Medical imaging datasets frequently contain rare conditions, unusual anatomy, imaging artifacts, or overlapping diseases.

Ignoring these edge cases can severely limit AI model generalization.

Examples of Edge Cases

- Rare tumors

- Motion artifacts

- Uncommon fractures

- Multiple disease coexistence

- Pediatric anatomical variations

- Low-quality scans

- Contrast enhancement abnormalities

Why This Matters

AI systems trained only on ideal datasets may fail in real clinical environments.

Best Practice

Include:

- Diverse patient populations

- Rare disease samples

- Multi-center imaging datasets

- Different scanner types

- Various image qualities

Robust datasets improve AI reliability across real-world healthcare settings.
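A simple coverage report can show whether scanner types, sites, or rare categories are actually present in a dataset before annotation effort is spent. The metadata format, the `coverage_report` name, the vendor names, and the 5% threshold below are all assumptions for illustration. Counts reveal gaps in coverage, but not whether the edge cases that are present were labeled correctly.

```python
from collections import Counter


def coverage_report(metadata, field, expected=(), min_share=0.05):
    """Count studies per value of one metadata field and flag under-represented values."""
    counts = Counter(m.get(field, "unknown") for m in metadata)
    total = sum(counts.values()) or 1
    report = {}
    # Include expected values that never appear, so complete gaps are flagged too.
    for value in sorted(set(counts) | set(expected)):
        n = counts.get(value, 0)
        share = n / total
        report[value] = {"count": n, "share": round(share, 3), "under_represented": share < min_share}
    return report


studies = [{"scanner": "VendorA"}] * 18 + [{"scanner": "VendorB"}] * 11 + [{"scanner": "VendorC"}]
for value, row in coverage_report(studies, "scanner", expected=("VendorD",)).items():
    print(value, row)
```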

6. Insufficient Dataset Diversity

Bias in medical datasets is a growing challenge in healthcare AI.

Many datasets lack diversity across:

- Age groups

- Ethnic backgrounds

- Geographic regions

- Imaging devices

- Disease stages

- Clinical environments

This can cause AI models to perform poorly for underrepresented populations.

Consequences

- Reduced fairness in healthcare AI

- Diagnostic disparities

- Poor generalization

- Regulatory concerns

- Clinical adoption barriers

Best Practice

Build diverse datasets that reflect real-world patient demographics.

Diverse data, combined with evaluating model performance separately for each patient subgroup, helps reveal and reduce unfair performance gaps in AI systems.
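Subgroup gaps only become visible when performance is reported per group rather than as a single aggregate. The minimal sketch below computes sensitivity for each subgroup; the `(group, truth, prediction)` tuple format and the age-band groups are hypothetical.

```python
from collections import defaultdict


def sensitivity_by_group(cases):
    """Sensitivity per subgroup; each case is (group, truth, prediction) with 1 = disease present."""
    tp, fn = defaultdict(int), defaultdict(int)
    for group, truth, pred in cases:
        if truth == 1:  # sensitivity only considers truly positive cases
            if pred == 1:
                tp[group] += 1
            else:
                fn[group] += 1
    return {g: tp[g] / (tp[g] + fn[g]) for g in sorted(set(tp) | set(fn))}


cases = [
    ("age<40", 1, 1), ("age<40", 1, 1), ("age<40", 1, 0), ("age<40", 0, 0),
    ("age>=65", 1, 1), ("age>=65", 1, 0), ("age>=65", 1, 0), ("age>=65", 1, 0),
]
print(sensitivity_by_group(cases))
```

With these toy numbers the model detects two thirds of positive cases in the younger group but only a quarter in the older group, a gap that a pooled sensitivity figure would hide.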

7. Incorrect Segmentation Boundaries

Medical image segmentation is one of the most challenging annotation tasks.

Small boundary errors can significantly affect AI model accuracy.

Common Segmentation Problems

- Incomplete tumor outlines

- Over-segmentation

- Under-segmentation

- Inconsistent pixel labeling

- Missing lesion regions

Why Segmentation Accuracy Matters

In applications like cancer detection, surgical planning, and organ analysis, precise boundaries are essential.

Best Practice

Use:

- Expert-reviewed segmentation workflows

- Advanced annotation platforms

- Consensus validation

- Pixel-level QA checks

- AI-assisted segmentation refinement

Precise segmentation directly improves deep learning performance.
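A pixel-level QA check can compare each submitted mask against an expert-reviewed reference and flag over- and under-segmentation separately. In this sketch masks are sets of `(row, col)` pixels, and the function name and 10% tolerance are assumptions. Pixel counts do not capture how far a boundary strays; distance-based measures such as the Hausdorff distance are commonly used alongside them.

```python
def boundary_qa(annotation, reference, tolerance=0.10):
    """Compare an annotator's mask with an expert reference mask (sets of (row, col) pixels)."""
    if not reference:
        return ["region labeled where reference is empty"] if annotation else []
    if not annotation:
        return ["empty mask: lesion missed"]
    ref_area = len(reference)
    over = len(annotation - reference) / ref_area   # labeled pixels outside the reference
    under = len(reference - annotation) / ref_area  # reference pixels the annotator missed
    flags = []
    if over > tolerance:
        flags.append(f"over-segmentation: {over:.0%} of reference area")
    if under > tolerance:
        flags.append(f"under-segmentation: {under:.0%} of reference area")
    return flags


# A 10x10 reference lesion and an annotation shifted two columns to the right.
reference = {(r, c) for r in range(10) for c in range(10)}
shifted = {(r, c) for r in range(10) for c in range(2, 12)}
print(boundary_qa(shifted, reference))
```

Reporting the two error directions separately matters because a mask can score a reasonable overlap while systematically cutting off one edge of a tumor.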

8. Failure to Protect Patient Data Privacy

Medical imaging datasets often contain sensitive patient information.

Many organizations underestimate the importance of healthcare data privacy regulations.

Compliance Risks

Failure to comply with regulations may lead to:

- Legal penalties

- Data breaches

- Reputational damage

- Regulatory investigations

- Loss of patient trust

Important Compliance Standards

- HIPAA

- GDPR

- HITECH

- FDA regulations

- Local healthcare privacy laws

Best Practice

Implement:

- Data anonymization

- De-identification protocols

- Secure data transfer

- Access controls

- Encryption standards

- Compliance audits

Patient privacy should remain a top priority in all medical annotation workflows.
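As an illustration of de-identification, the sketch below treats a DICOM header as a plain dict keyed by DICOM attribute keywords (in practice the header would be read with a library such as pydicom). The identifier list is a deliberately short, hypothetical subset; a production pipeline should follow a complete de-identification profile and also check pixel data for burned-in text, which this sketch does not do. `PatientID` is replaced with a keyed hash so that studies from the same patient stay linked without exposing the real ID.

```python
import hashlib
import hmac

# Hypothetical, deliberately incomplete list of direct identifiers (DICOM keywords).
DIRECT_IDENTIFIERS = {
    "PatientName", "PatientBirthDate", "PatientAddress",
    "ReferringPhysicianName", "InstitutionName", "AccessionNumber",
}


def deidentify(header: dict, secret_key: bytes) -> dict:
    """Return a copy of a DICOM-style header with identifiers removed and PatientID pseudonymized."""
    clean = {k: v for k, v in header.items() if k not in DIRECT_IDENTIFIERS}
    if "PatientID" in clean:
        # Keyed hash: the same patient always maps to the same pseudonym, but the
        # pseudonym cannot be recomputed by anyone who lacks the secret key.
        digest = hmac.new(secret_key, clean["PatientID"].encode(), hashlib.sha256)
        clean["PatientID"] = digest.hexdigest()[:16]
    return clean


header = {"PatientName": "DOE^JANE", "PatientID": "12345", "Modality": "CT", "AccessionNumber": "A1"}
print(deidentify(header, secret_key=b"store-this-key-separately"))
```

The secret key should be stored apart from the annotation data, since anyone holding it could re-link pseudonyms to known patient IDs.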

9. Overlooking Annotation Tool Limitations

Not all annotation tools are suitable for medical imaging projects.

Generic annotation software may lack advanced capabilities required for healthcare datasets.

Common Tool Limitations

- Poor DICOM support

- Limited 3D annotation features

- Weak collaboration workflows

- Inaccurate segmentation tools

- Insufficient QA capabilities

- Lack of AI-assisted labeling

Best Practice

Choose medical-grade annotation platforms that support:

- DICOM imaging

- Multi-slice visualization

- 3D volumetric annotation

- AI-assisted labeling

- Cloud collaboration

- Audit tracking

- HIPAA compliance

The right tools improve both efficiency and annotation quality.
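Multi-slice viewing and 3D annotation depend on stacking slices in the correct spatial order, and file names are not guaranteed to follow that order. A common approach, sketched here, projects each slice's `ImagePositionPatient` onto the slice normal computed from `ImageOrientationPatient`. Headers are shown as plain dicts for illustration, the file names are hypothetical, and the sketch assumes every slice in the series shares one orientation.

```python
def slice_normal(orientation):
    """Cross product of the row and column direction cosines in ImageOrientationPatient."""
    r, c = orientation[:3], orientation[3:]
    return (
        r[1] * c[2] - r[2] * c[1],
        r[2] * c[0] - r[0] * c[2],
        r[0] * c[1] - r[1] * c[0],
    )


def sort_slices(slices):
    """Order slices along the scan axis by position, not by file order."""
    # Assumes every slice shares the first slice's orientation.
    normal = slice_normal(slices[0]["ImageOrientationPatient"])

    def position(s):
        # Distance of the slice origin along the normal vector.
        return sum(p * n for p, n in zip(s["ImagePositionPatient"], normal))

    return sorted(slices, key=position)


axial = (1, 0, 0, 0, 1, 0)
series = [
    {"file": "slice_01.dcm", "ImagePositionPatient": (0.0, 0.0, 10.0), "ImageOrientationPatient": axial},
    {"file": "slice_02.dcm", "ImagePositionPatient": (0.0, 0.0, -5.0), "ImageOrientationPatient": axial},
    {"file": "slice_03.dcm", "ImagePositionPatient": (0.0, 0.0, 2.5), "ImageOrientationPatient": axial},
]
print([s["file"] for s in sort_slices(series)])  # → ['slice_02.dcm', 'slice_03.dcm', 'slice_01.dcm']
```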

10. Relying Too Much on Automated Annotation

AI-assisted annotation can improve productivity, but overreliance on automation creates risks.

Automated systems can generate:

- Incorrect labels

- Poor segmentation

- Missed abnormalities

- Systematic bias

Why Human Oversight Matters

Healthcare AI requires clinical validation.

Fully automated annotation pipelines without expert review can introduce large-scale errors into training datasets.

Best Practice

Use a human-in-the-loop approach:

- AI generates preliminary annotations

- Medical experts review outputs

- Corrections are validated

- Final QA checks ensure quality

Combining automation with expert oversight delivers better scalability and accuracy.
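The human-in-the-loop steps above can be expressed as a small workflow sketch. The `PreAnnotation` record and both helper functions are hypothetical. Every AI-generated label goes to an expert, the least confident ones are reviewed first, and finalization is refused until every item has an expert decision. The expert correction rate it reports is a useful signal: if that rate rises, the pre-annotation model may be introducing systematic errors.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PreAnnotation:
    image_id: str
    ai_label: str
    ai_confidence: float
    expert_label: Optional[str] = None  # filled in during expert review


def review_queue(items):
    """Every AI label goes to an expert; the least confident items are reviewed first."""
    return sorted(items, key=lambda item: item.ai_confidence)


def finalize(items):
    """Accept only expert-reviewed labels and report how often experts corrected the AI."""
    unreviewed = [i.image_id for i in items if i.expert_label is None]
    if unreviewed:
        raise ValueError(f"not yet reviewed: {unreviewed}")
    corrected = sum(i.expert_label != i.ai_label for i in items)
    final = {i.image_id: i.expert_label for i in items}
    return final, corrected / len(items)


batch = [PreAnnotation("a", "nodule", 0.95), PreAnnotation("b", "normal", 0.40), PreAnnotation("c", "nodule", 0.70)]
print([i.image_id for i in review_queue(batch)])
for item in batch:
    item.expert_label = "nodule"  # simulated expert decisions
print(finalize(batch))
```

Confidence only sets the review order here, never whether review happens, which keeps the expert in the loop for every label.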

11. Inconsistent Annotation Across Imaging Modalities

Different imaging modalities require different annotation approaches.

A labeling strategy suitable for X-rays may not work effectively for MRI or CT scans.

Common Problems

- Modality-specific misinterpretation

- Inconsistent labeling standards

- Poor cross-modality alignment

- Inaccurate anatomical references

Best Practice

Create modality-specific annotation workflows for:

- MRI imaging

- CT imaging

- Ultrasound imaging

- Pathology slides

- Mammography

- Retinal imaging

Specialized workflows improve annotation precision.
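One way to keep modality-specific workflows explicit is a lookup table keyed by the DICOM `Modality` code (CT, MR, US, MG for mammography, SM for slide microscopy, OP for ophthalmic photography). The workflow values, tool names, and reviewer roles below are hypothetical placeholders, not clinical recommendations. The lookup rejects any modality without a defined workflow, so a study cannot silently fall back to a generic one.

```python
# Hypothetical per-modality workflow settings keyed by DICOM Modality codes.
MODALITY_WORKFLOWS = {
    "CT": {"unit": "volume", "tools": {"mask_3d", "polygon"}, "reviewer": "radiologist"},
    "MR": {"unit": "volume", "tools": {"mask_3d", "polygon"}, "reviewer": "radiologist"},
    "US": {"unit": "frame", "tools": {"polygon", "caliper"}, "reviewer": "radiologist"},
    "MG": {"unit": "image", "tools": {"bounding_box", "polygon"}, "reviewer": "breast_radiologist"},
    "SM": {"unit": "tile", "tools": {"polygon", "point"}, "reviewer": "pathologist"},
    "OP": {"unit": "image", "tools": {"polygon", "point"}, "reviewer": "ophthalmologist"},
}


def workflow_for(modality: str, requested_tool: str) -> dict:
    """Look up the workflow for a study's modality and reject tools it does not allow."""
    try:
        workflow = MODALITY_WORKFLOWS[modality]
    except KeyError:
        raise ValueError(f"no annotation workflow defined for modality '{modality}'") from None
    if requested_tool not in workflow["tools"]:
        raise ValueError(f"tool '{requested_tool}' is not allowed for {modality}")
    return workflow


print(workflow_for("CT", "mask_3d"))
```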

12. Ignoring Continuous Dataset Maintenance

Medical datasets are not static.

Clinical standards evolve, imaging technologies improve, and disease classifications change over time.

Many organizations fail to maintain and update annotated datasets.

Consequences

- Outdated labels

- Reduced model relevance

- Lower diagnostic accuracy

- Poor adaptation to new technologies

Best Practice

Maintain continuous dataset improvement through:

- Periodic dataset reviews

- Annotation updates

- Model retraining

- Clinical validation cycles

- Continuous quality audits

Long-term dataset maintenance ensures AI systems remain clinically effective.
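Periodic reviews are easier when each dataset release records a fingerprint of every annotation and the guideline schema version it was made under. The sketch below shows one hypothetical way to do this with content hashes, so reviewers can see exactly which labels were added, removed, or changed between releases, for example after a disease classification update. The annotation format and function names are assumptions.

```python
import hashlib
import json


def manifest(annotations: dict, schema_version: str) -> dict:
    """Fingerprint every annotation so two dataset releases can be compared."""
    return {
        "schema_version": schema_version,
        "items": {
            # sort_keys makes the hash independent of dict key order.
            image_id: hashlib.sha256(json.dumps(ann, sort_keys=True).encode()).hexdigest()
            for image_id, ann in annotations.items()
        },
    }


def diff(old: dict, new: dict) -> dict:
    """List annotations that were added, removed, or changed between two releases."""
    old_items, new_items = old["items"], new["items"]
    return {
        "schema_changed": old["schema_version"] != new["schema_version"],
        "added": sorted(new_items.keys() - old_items.keys()),
        "removed": sorted(old_items.keys() - new_items.keys()),
        "changed": sorted(k for k in old_items.keys() & new_items.keys() if old_items[k] != new_items[k]),
    }


v1 = manifest({"img1": {"label": "benign"}, "img2": {"label": "nodule"}}, "1.0")
v2 = manifest({"img1": {"label": "malignant"}, "img3": {"label": "nodule"}}, "1.1")
print(diff(v1, v2))
```

Items listed as changed are natural candidates for a clinical validation cycle before the model is retrained on the new release.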

Challenges in Medical Image Annotation

Medical image annotation involves several unique challenges compared to standard image labeling projects.

Complex Anatomy

Human anatomy is highly detailed and varies significantly between patients.

High Annotation Costs

Medical experts command higher compensation due to specialized knowledge.

Time-Intensive Workflows

Pixel-level segmentation and 3D annotations require substantial time investment.

Regulatory Complexity

Healthcare AI projects must comply with strict regulatory standards.

Data Scarcity

Rare diseases often lack sufficient annotated datasets.

Imaging Variability

Differences in imaging equipment, scan quality, and acquisition protocols create additional complexity.

Best Practices for High-Quality Medical Image Annotation

Organizations can improve annotation quality by following proven best practices.

Use Medical Experts

Collaborate with radiologists, clinicians, pathologists, and domain specialists.

Create Detailed Annotation Protocols

Document every annotation rule clearly.

Implement Multi-Level Quality Assurance

Use layered review systems and consensus validation.

Leverage AI-Assisted Annotation Carefully

Combine automation with human oversight.

Maintain Dataset Diversity

Include diverse patient demographics and imaging sources.

Prioritize Compliance and Security

Protect patient data throughout the annotation lifecycle.

Continuously Improve Datasets

Regularly review, update, and refine annotation quality.

The Growing Importance of Medical Image Annotation in AI Healthcare

The healthcare AI market is rapidly expanding.

Medical image annotation supports innovations in:

- Early disease detection

- Cancer diagnosis

- Surgical planning

- Clinical decision support

- Drug discovery

- Personalized medicine

- Telemedicine platforms

- Robotic surgery

- Digital pathology

As AI adoption accelerates, healthcare organizations increasingly require scalable, accurate, and compliant annotation services.

Professional annotation providers help businesses manage:

- Large-scale datasets

- Expert-led workflows

- Quality assurance

- Regulatory compliance

- Faster AI development cycles

Companies looking to scale healthcare AI initiatives often partner with specialized medical annotation providers such as https://www.oursglobal.com/outsource-image-annotation-services to improve data quality and operational efficiency.

Future Trends in Medical Image Annotation

The future of medical image annotation will be shaped by technological advancements and increasing AI adoption.

AI-Assisted Annotation

Semi-automated labeling tools will reduce annotation time by letting experts review and correct pre-generated labels instead of drawing each one from scratch.

3D and Volumetric Annotation

Advanced imaging applications will require more sophisticated 3D annotation capabilities.

Federated Learning

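Federated learning, which trains models across institutions without moving patient images off-site, will gain importance as a privacy-preserving training approach.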

Synthetic Medical Data

Synthetic image generation will help address data scarcity challenges.

Multi-Modal AI Models

Future systems will combine imaging, clinical notes, genomics, and pathology data.

Real-Time Annotation Systems

Interactive AI-assisted diagnostic workflows will become increasingly common.

Conclusion

Medical image annotation is one of the most important components of healthcare AI development. However, even advanced AI systems can fail if annotation workflows contain errors, inconsistencies, bias, or poor-quality data.

By avoiding these 12 common mistakes, healthcare organizations can significantly improve AI model performance, reduce operational risks, accelerate deployment timelines, and enhance patient outcomes.

Successful medical image annotation requires:

- Clinical expertise

- Standardized workflows

- Strong quality assurance

- Secure data management

- Continuous dataset improvement

- Advanced annotation technologies

As the healthcare industry continues embracing AI-driven innovation, investing in accurate and scalable medical image annotation processes will remain essential for building reliable, ethical, and clinically effective AI systems.

Organizations aiming to achieve high-quality healthcare AI outcomes should prioritize expert-driven annotation strategies and trusted annotation partners capable of delivering precision, compliance, and scalability.